Microsoft’s Face API, which is part of Azure Cognitive Services, has just received an update that makes it much better at recognizing people with dark skin. Ordinarily, that would be a good thing, but right now, not so much! Here’s why.

Microsoft has become deeply entangled in the current immigration politics of the U.S. It has a contract with U.S. Immigration and Customs Enforcement (ICE), and even though the company has publicly condemned the separation of families at the border, as far as some members of the public are concerned, that’s too little, too late. The damage has already been done.

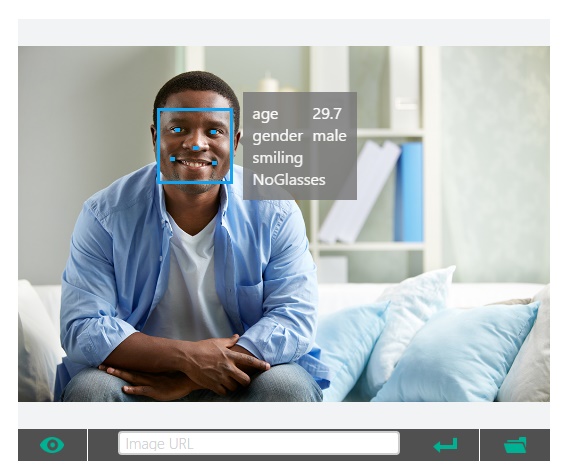

Be that as it may, the update to Microsoft’s Face API makes facial recognition of people with darker skin more accurate than previous iterations of the technology. According to Microsoft, the update reduces error rates in the identification of darker-skinned men and women by up to 20 times, while reducing error rates for all women by nine times.

In a blog post announcing the update, Microsoft said that the new Face API will “significantly reduce accuracy differences across the demographics” by expanding the data sets used to train facial recognition. The update also involved new data collection covering people with dark skin tones across different genders and ages. Microsoft says this improves its gender classification system by “focusing specifically on getting results for all skin tones.”

“The higher error rates on females with darker skin highlight an industry-wide challenge: Artificial Intelligence technologies are only as good as the data used to train them. If a facial recognition system is to perform well across all people, the training dataset needs to represent a diversity of skin tones as well as factors such as hairstyle, jewelry, and eyewear.”
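To make “accuracy differences across the demographics” concrete, here is a minimal sketch of how error rates might be broken down by group. It is not Microsoft’s evaluation method. The function names, the group labels `group_a` and `group_b`, and the toy records are all made up for illustration.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Return {group: error rate} for (group, true_label, predicted_label) records."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, truth, predicted in records:
        totals[group] += 1
        if truth != predicted:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def largest_gap(rates):
    """Difference between the worst and best per-group error rate."""
    return max(rates.values()) - min(rates.values())


# Hypothetical toy data: (group, true label, label the classifier predicted).
sample = [
    ("group_a", "female", "female"),
    ("group_a", "male", "male"),
    ("group_a", "female", "female"),
    ("group_a", "male", "male"),
    ("group_b", "female", "male"),  # the only misclassification
    ("group_b", "male", "male"),
    ("group_b", "female", "female"),
    ("group_b", "male", "male"),
]

rates = error_rates_by_group(sample)
gap = largest_gap(rates)
print(rates, gap)
```

A single overall error rate would hide the gap between the two groups here, which is why results are reported per group.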

There is another angle to this story: the underrepresentation of people of color in the tech community. Facial recognition technology, not just Microsoft’s but across the board, struggles to accurately identify people of color. The biggest reason is that the creators and first test subjects of such technologies are rarely people of color. And since an artificial intelligence system learns from the data it encounters first, its earliest examples will most likely not include many people of color.

Then there is the political angle, of course! As noted earlier, Microsoft has a contract with ICE and has supplied the agency with Azure Cognitive Services as part of the suite of tools in its Azure Government contracts.

In January, Microsoft wrote, “This Authority To Operate (ATO) is a critical next step in enabling ICE to deliver such services as cloud-based identity and access, serving both employees and citizens from applications hosted in the cloud.

“This can help employees make informed decisions faster, with Azure Government enabling them to process data on edge devices or utilize deep learning capabilities to accelerate facial recognition and identification.”